It’s been a consequential week for artificial intelligence: Google released its newest AI model, Gemini, which beat out OpenAI’s best technology in some tests.

But some, including Pope Francis, who was shown in fake AI photos that went viral earlier this year, have grown wary of an AI arms race. On Thursday, the pope called for a binding international treaty to avoid what he called “technological dictatorship.” Meanwhile, as artificial intelligence becomes more powerful, the companies building it are increasingly keeping the tech closely guarded.

One exception is Meta, the company that owns Facebook and Instagram. Meta says there’s a better and fairer way to build AI without a handful of companies gaining too much power. Unlike other big technology labs, Meta publishes and shares its research, which Joelle Pineau, the leader of Meta’s Fundamental AI Research group, or FAIR, said differentiates the company.

FAIR developed PyTorch, a piece of coding infrastructure that much of modern artificial intelligence is built on. Pineau described it as “a set of computer libraries that gives you a way to build pieces of code much faster.” Despite developing the program, Meta no longer owns it, having “passed it on to an external foundation,” according to Pineau.

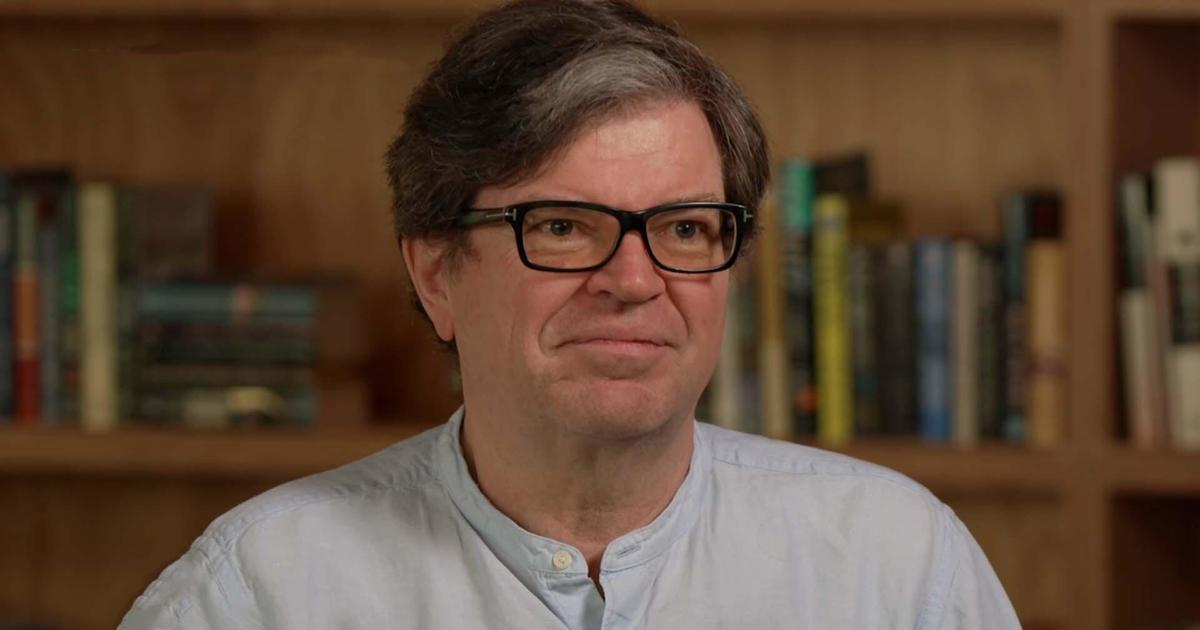

However, this open-sourcing isn’t charity. Meta hopes that the open approach will help it keep pace with Google and Microsoft by leveraging the help of thousands of independent developers. Open science is also a deeply held conviction for the leaders of FAIR, including Meta’s Chief AI Scientist Yann LeCun, who said he has “no interest in” working with “any company that was not practicing open research.”

LeCun, whom Mark Zuckerberg hired to start FAIR in 2013, is an AI pioneer who helped prove that computers could learn on their own to recognize numbers. He used neural nets long before most others believed in them.

“Essentially very, very, very few people were working on neural nets then. A few scientists in San Diego, for example, were working on this and then Geoffrey Hinton, who I ended up working with, who was interested in this, but he was really kind of a bit alone,” LeCun said.

Meta’s push for open source development

Geoffrey Hinton, who spoke to CBS Saturday Morning about AI in March 2023, and that little band of upstarts were eventually proven right. In 2018, Hinton, LeCun and Yoshua Bengio, the so-called “Godfathers of AI,” shared the Turing Award for their groundbreaking research.

After Zuckerberg hired him at Facebook, LeCun’s AI work helped the social media site recommend friends, optimize ads and automatically censor posts that violate the platform’s rules.

“If you try to rip out deep learning out of Meta today, the entire company crumbles, it’s literally built around it,” LeCun explained.

However, the dynamic is changing fast. AI isn’t just supporting existing technology, but threatening to overturn it, creating a possible future where the open Internet with its millions of independent websites is replaced by a handful of powerful AI systems.

“If you imagine this kind of future where all of our information diet is mediated by those AI systems, you do not want those things to be controlled by a small number of companies on the West Coast of the U.S.,” LeCun said. “Those systems will constitute the repository of all human knowledge and culture. You can’t have that centralized. It needs to be open.”

The debate around the dangers of AI

Some agree with LeCun that AI will be so transformational that it must be publicly shared. Others fear it could be so dangerous that it should be built by just a few, and perhaps at a much slower pace. Even the “Godfathers of AI” are split: Hinton warns that artificial intelligence could “take over” from people, and Bengio is calling for regulation of the technology. LeCun emphasizes open sourcing and disagrees with Hinton’s view that AI could wipe out humanity, calling the idea of existential risk science fiction.

“It’s below the chances of an asteroid hitting the earth and, you know, global nuclear war,” LeCun said.

The disagreement, LeCun said, boils down to your faith in people. He trusts world institutions as a way to keep AI safe. Hinton worries that repressive governments could use robot soldiers to perpetrate atrocities, while LeCun actually sees a potential upside in the use of autonomous weapons.

“Ukraine makes massive use of drones, and they’re starting to put AI into it. Is it good or is it bad? I think it’s necessary, regardless of whether you think it’s good or bad,” said LeCun. “… It’s the history of the world, you know? Who has better technology? Is it the good guys or the bad guys?”

Armed with that mix of optimism and inevitability, LeCun opposes regulation on basic AI research. His main note of caution is against superintelligence, which is still very far off, he said. Instead, he and Meta are focused on robots that can do basic household tasks. Dhruv Batra, who oversees Meta’s robotics lab, is working to create a helpful, autonomous domestic robot. While Batra was eager to show CBS Saturday Morning how far his team has come, he conceded that it’ll be a long time before robots are cleaning and managing household tasks for the general public.

“There’s a whole amount of knowledge that you just consider common sense that we don’t have yet in robots or in AI in general,” Batra said.

Step by step, those skills are being taught as humans choose to pursue superhuman intelligence. But if it’s openly shared, this awesome new force won’t replace us but will empower us, according to LeCun.

“You have to do it right, obviously,” LeCun said. “There are side effects of technology that you have to mitigate as much as you can, but the benefits far overwhelm the dangers.”